In many laboratory environments, cell counting is treated as a routine, almost trivial step, an operational necessity rather than a source of analytical risk. Yet this assumption is increasingly misaligned with the realities of modern biological workflows. As experimental systems grow more complex and downstream applications more sensitive, the notion that all counting approaches are functionally equivalent becomes difficult to defend.

Cell counting remains a significant, and often underappreciated, source of variability.

The Illusion of Standardization

At a glance, counting appears straightforward: prepare a sample, load it, and obtain a concentration and viability metric. Whether performed manually or with an automated cell counter, the output is typically reduced to a few key numbers. This apparent simplicity creates the illusion of standardization.

However, beneath this surface lies a chain of assumptions, each with the potential to introduce variability. Differences in sample preparation, staining, imaging conditions, and analysis algorithms can all influence the final result. When these variables are not tightly controlled, the output becomes less a measurement and more an approximation.

Pre-Analytical Variability: Where Error Begins

Variability often originates before a sample ever reaches an instrument. Subtle differences in handling can significantly affect cell integrity and distribution.

Time-to-analysis is a critical but frequently overlooked parameter. Delays between harvesting and counting can alter viability, particularly in primary or stress-sensitive cells. Temperature fluctuations, mechanical stress during pipetting, and inconsistent resuspension can further distort the sample.

Cell type adds another layer of complexity. Immortalized lines tend to be more resilient and forgiving, masking inconsistencies in technique. In contrast, primary cells and stem cell populations are highly sensitive to environmental perturbations. What appears to be a minor deviation in handling can translate into measurable differences in viability and concentration.

Analytical Variability: The Black Box Problem

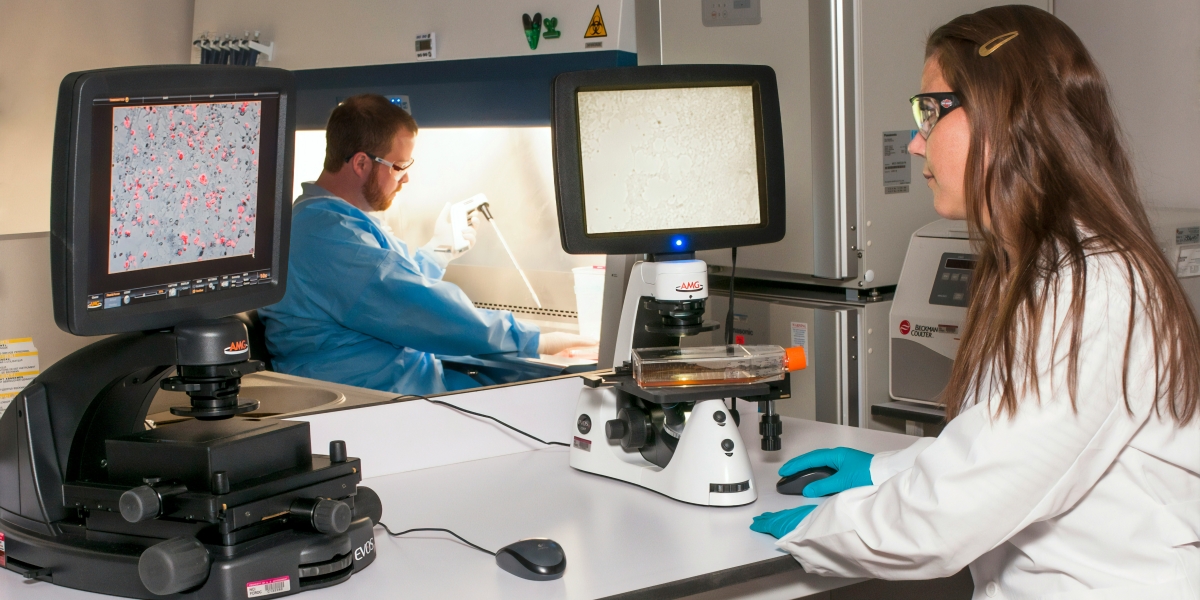

Once the sample is introduced into a counting system, a new set of variables comes into play. Even with an automated cell counter, the analytical process is not inherently objective. It is governed by embedded assumptions.

Image-based systems rely on thresholding and segmentation algorithms to distinguish cells from debris. These decisions, while automated, are not universally applicable across all cell types or conditions. Small changes in morphology, aggregation, or background noise can shift classification boundaries.
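
To make this concrete, here is a minimal Python sketch of how a single threshold setting can change a count. It is not any vendor's algorithm: the one-dimensional intensity profile, the `count_objects` function, and the threshold values are all hypothetical, chosen only to show that the same image data can yield different results.

```python
def count_objects(profile, threshold):
    """Count contiguous runs of pixels at or above the threshold."""
    count = 0
    inside = False
    for value in profile:
        if value >= threshold and not inside:
            # Entering a new bright region: treat it as one object.
            count += 1
            inside = True
        elif value < threshold:
            # Dropped below the threshold: the current object has ended.
            inside = False
    return count


# Hypothetical intensity profile along one line of an image:
# a bright cell, a dim cell, a speck of debris, and two touching cells
# separated by a shallow dip in intensity.
profile = [0, 0, 9, 9, 0, 0, 5, 5, 0, 0, 2, 0, 0, 8, 6, 8, 0]

counts = {t: count_objects(profile, t) for t in (1, 4, 6, 7)}
print(counts)  # {1: 4, 4: 3, 6: 2, 7: 3}
```

The profile contains four real cells, yet no threshold recovers them correctly. At 1 the debris is counted and the touching pair merges; at 4 the debris is rejected but the pair still merges; at 6 the dim cell is also lost; at 7 the pair is split but the dim cell is still missed.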

Debris discrimination is particularly problematic in heterogeneous samples. Dead cells, apoptotic bodies, and extracellular material can be misclassified, inflating or deflating counts depending on the analytical approach.

Clumping introduces further ambiguity. Aggregates may be counted as single events or partially resolved into multiple cells, depending on the system’s capabilities. In high-density samples, this effect becomes more pronounced, undermining quantitative accuracy.

The Limits of Viability Metrics

Viability assessment is often treated as a binary output (live or dead). In reality, it is a continuum.

Traditional dye exclusion methods capture membrane integrity but fail to account for early apoptotic states or metabolic dysfunction. As a result, cells that are functionally compromised may still be classified as viable.
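
The arithmetic behind this gap is simple, and a short Python sketch shows how large it can be. The cell numbers below are hypothetical, and `viability_percent` is an illustrative helper rather than part of any instrument's software.

```python
def viability_percent(live, dead):
    """Viability as the percentage of counted cells classified as live."""
    total = live + dead
    if total == 0:
        raise ValueError("no cells counted")
    return 100.0 * live / total


# Hypothetical sample of 1000 cells: 100 have ruptured membranes and
# 150 are early apoptotic (membrane still intact, so the dye is excluded).
membrane_compromised = 100
early_apoptotic = 150
healthy = 750

# Dye exclusion sees only membrane integrity, so early apoptotic cells count as live.
dye_exclusion = viability_percent(healthy + early_apoptotic, membrane_compromised)

# A functional definition would classify early apoptotic cells as non-viable.
functional = viability_percent(healthy, membrane_compromised + early_apoptotic)

print(dye_exclusion, functional)  # 90.0 75.0
```

Under these assumed numbers, the same sample reads as 90% viable by dye exclusion and 75% viable by a functional definition.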

More advanced fluorescence-based approaches expand this capability, enabling multiparametric assessments. Systems such as those developed by Logos Biosystems, including platforms like the LUNA-FX7, are designed to address these limitations by incorporating additional biological context into the counting process. However, even with these tools, interpretation remains dependent on assay design and experimental intent.

Operator and Workflow Effects

Even in partially automated environments, human factors persist. Variability in pipetting technique, sample mixing, and loading can influence results. In manual workflows, these effects are magnified, leading to significant inter-operator variability.
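
One common way to put a number on inter-operator variability is the coefficient of variation (CV) across operators counting the same sample. The sketch below uses Python's standard `statistics` module; the operator names and counts are hypothetical.

```python
from statistics import mean, stdev


def cv_percent(values):
    """Coefficient of variation: sample standard deviation / mean, as a percentage."""
    return 100.0 * stdev(values) / mean(values)


# Hypothetical concentrations (cells/mL) reported by three operators
# for aliquots of the same sample.
counts_by_operator = {
    "operator_a": 1.00e6,
    "operator_b": 1.10e6,
    "operator_c": 0.90e6,
}

inter_operator_cv = cv_percent(list(counts_by_operator.values()))
print(f"Inter-operator CV: {inter_operator_cv:.1f}%")  # Inter-operator CV: 10.0%
```

Tracking this figure over time gives a laboratory a simple, comparable indicator of whether changes to training or protocol are actually reducing operator-driven spread.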

Automation reduces, but does not eliminate, these inconsistencies. Instead, it shifts the burden toward workflow design. Without standardized protocols governing sample preparation and handling, even the most advanced automated cell counter cannot fully compensate for upstream variability.

Why This Matters Now

The consequences of counting variability are no longer confined to minor experimental noise. In contemporary research settings, they can have downstream implications that are both scientific and operational.

In high-throughput screening, small inaccuracies can propagate across datasets, affecting hit identification and reproducibility. In cell therapy and regenerative medicine workflows, variability in viability and concentration can influence dosing, efficacy, and regulatory compliance.
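
A back-of-the-envelope calculation shows how a counting error carries through to a dose. The Python sketch below assumes the dose volume is calculated from the measured concentration; the function name and all values are hypothetical.

```python
def delivered_cells(target_cells, true_conc, measured_conc):
    """Cells actually delivered when dose volume is based on a measured concentration."""
    volume_ml = target_cells / measured_conc  # volume drawn, based on the count
    return volume_ml * true_conc              # cells that volume really contains


# Hypothetical values: target dose of 1e6 cells, true concentration of
# 2e6 cells/mL, and a count that overestimates concentration by 10%.
target = 1.0e6
true_conc = 2.0e6
measured_conc = true_conc * 1.10

dose = delivered_cells(target, true_conc, measured_conc)
percent_of_target = 100.0 * dose / target
print(f"{dose:.0f} cells delivered ({percent_of_target:.1f}% of target)")
```

With a 10% overestimate of concentration, the delivered dose falls to about 91% of the target, because too little volume is drawn. An underestimate of the same size would push the dose above the target instead.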

As multi-site collaborations become more common, reproducibility across laboratories is increasingly scrutinized. In this context, cell counting transitions from a routine task to a critical control point.

Reframing Cell Counting as a Critical Measurement

To address these challenges, cell counting must be treated not as a commodity but as a measurement that requires deliberate control.

This begins with standardizing pre-analytical workflows: consistent handling, defined timelines, and cell-type-specific protocols. It extends to selecting appropriate analytical tools, including an automated cell counter whose capabilities match the biological complexity of the sample.

Most importantly, it requires recognizing that no single approach is universally optimal. The choice of method should be driven by experimental context, not convenience.

Cell counting sits at the foundation of countless biological workflows. Treating it as a solved problem introduces unnecessary risk into otherwise well-designed experiments.

By acknowledging and addressing the hidden sources of variability, laboratories can improve the accuracy of their measurements and the reliability of their downstream outcomes. In an era defined by precision and reproducibility, that level of control is no longer optional.