Not Another AI Assistant: Juma Aims to Deliver Ready-to-Use Marketing Assets

In a landscape crowded with chatbots and “assistants,” the leap from conversation to meaningful execution is where most solutions stall. Juma was built to cross that line decisively. It operates not as a novelty or a helper that drafts half-finished work, but as a production-grade system for marketers who need to research, strategize, create, and analyze with speed and rigor.

This article explains how Juma differs from a typical AI assistant and why it is positioned as an engine for delivering ready-to-use marketing assets.

From Team-GPT to Juma

Juma represents the evolution of Team-GPT into a purpose-built platform for modern marketing organizations. What began as a powerful interface for leveraging large language models has matured into an end-to-end environment designed around the realities of campaign planning, content operations, and performance measurement.

The rebrand signals an expanded mission. As Juma, the platform is positioned as an AI workspace designed for marketing teams. Its remit is not merely to answer questions but to move work forward, unify stakeholders, and produce assets that are likely to be deployable across channels right away.

An AI Workspace That Unites the Marketing Function

Marketing work requires cross-functional collaboration, shared context, and repeatable processes. Juma addresses these needs by uniting teams in one AI-native workspace where research, strategy, content creation, and analytics come together in a coherent flow.

Rather than handing work off between disjointed tools and teams, the platform keeps the entire lifecycle in one place, preserving context and potentially accelerating iteration. Research informs strategy inside the same environment where content is developed, versioned, and approved, and where analytics close the loop with measurable outcomes.

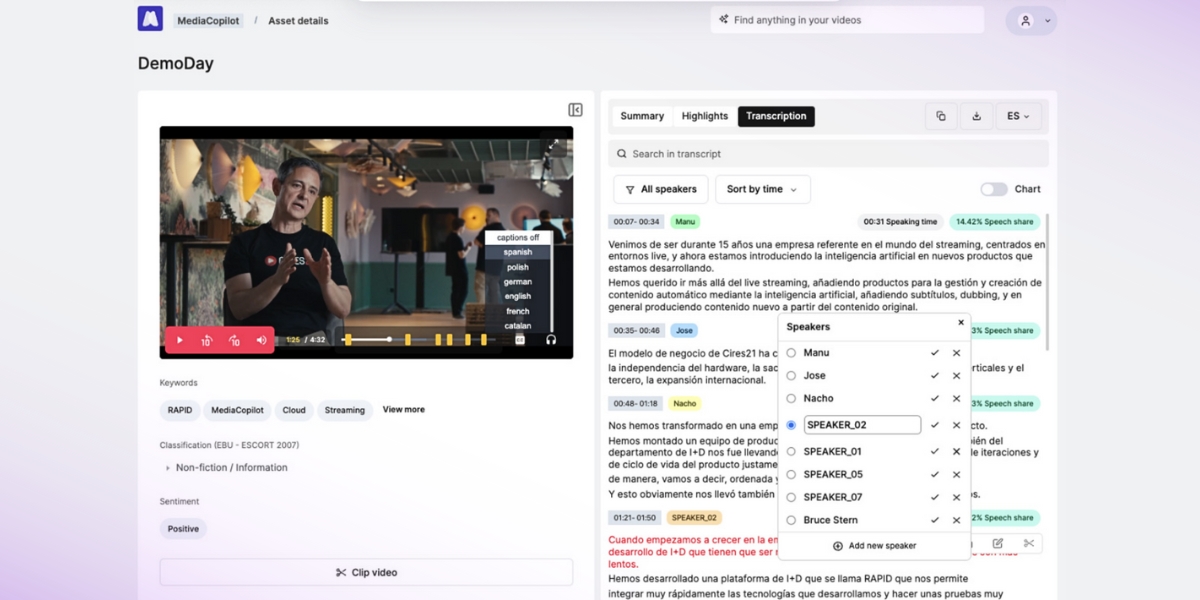

The Superagent Difference: From Chat to Completed Work

The distinction that matters most is Juma’s operating model. Juma is a superagent built for modern marketing teams. Unlike traditional AI assistants, Juma executes complete workflows autonomously: analyzing data, generating content, and delivering ready-to-use assets. That translates to practical advantages: campaign briefs arrive with audience insights already synthesized; social calendars are generated alongside post copy, visuals, and platform-specific variants; landing pages come with on-brand copy, SEO metadata, and A/B test ideas; performance reports update with clear narratives, not just raw charts. Because the output is ready to use, teams are likely to see value right away.

Photo Courtesy: Juma

Research That Starts Strong and Stays Current

Effective marketing depends on timely insight. Juma’s research capabilities bring structure and speed to market scans, competitive reviews, and audience analysis. It surfaces patterns from both internal documents and external sources, and frames implications in a way strategists can act on.

The system’s memory of prior research helps ensure knowledge compounds over time rather than resetting with each new brief. As a result, campaign ideas are grounded in evidence, not guesswork, and messaging decisions are more likely to be justified with a clear rationale.

Strategy That Is Actionable by Design

A strategy is only as good as its path to execution. Juma translates research into structured plans: positioning statements, messaging architectures, channel guidance, and measurement frameworks that anticipate the work downstream.

Because the same system will create content and analyze performance, strategies are compatible with production from the start. This reduces friction, shortens timelines, and increases the likelihood that plans will survive real-world constraints without losing their intent.

Content That Ships Ready for Deployment

Where most assistants propose ideas or outline drafts, Juma produces finished assets with the fidelity and formatting teams expect. Long-form articles arrive with editorial structure, SEO optimization, and internal linking suggestions. Email campaigns include subject line variants, preheaders, body copy, and device-aware formatting. Paid ads come with creative concepts, headline options, and platform-fit dimensions.

All of it respects brand voice, complies with channel best practices, and is prepared for immediate publication or review. The result is a content engine that keeps pace with modern calendar demands without sacrificing craft.

Analysis That Closes the Loop

Measurement is integral, not an afterthought. Juma ingests performance data, interprets trends, and produces clear narratives with recommended actions. Reports emphasize what changed, why it matters, and what to do next.

Because the same system also creates content, feedback loops are tight: learnings translate quickly into new tests, revised messaging, and refined targeting. This cycle of analyze, adapt, and redeploy turns incremental improvements into sustained performance gains.

Summary

Marketing teams are under pressure to do more with less, to personalize without losing coherence, and to demonstrate impact with clarity. Tools that only accelerate drafting no longer suffice. What teams need is a tool that turns goals into outcomes with fewer handoffs, fewer manual steps, and fewer compromises. Juma aims to meet that need by uniting people, process, and production in one environment, and by taking responsibility for the full arc of work, from insight to asset to analysis.