By: Mark Kruckeberg

Artificial intelligence is dominating boardroom conversations. Yet as many organizations attempt to deploy it, an uncomfortable truth is emerging: most companies are trying to apply AI to operational environments that are already chaotic.

Organizations across industries are investing billions in AI platforms, machine learning tools, and advanced analytics in hopes of unlocking new efficiencies and competitive advantages. Behind the excitement, however, a quieter reality is taking shape.

Many AI initiatives struggle to move beyond pilots or isolated experiments. Projects that begin with enthusiasm often stall before delivering meaningful enterprise impact.

Recent research from the Massachusetts Institute of Technology highlights what it calls the “GenAI Divide.” It finds that, despite billions in investment, as many as 95% of organizations are seeing little to no measurable return from AI initiatives due to challenges in workflows, data, and operational alignment.

In conversations with executives across industries, one pattern appears repeatedly: companies are attempting to introduce artificial intelligence into environments that were never designed for it.

In other words, they are trying to automate chaos instead of fixing it.

Artificial intelligence does not operate independently of the enterprise. It learns from the way an organization already works: the data it collects, the workflows that govern execution, and the rules that guide decision-making.

When those foundations are fragmented or inconsistent, AI does not resolve the problem. It accelerates it.

Most organizations today operate across a complex landscape of systems layered over decades: ERP platforms, CRM applications, departmental databases, spreadsheets, and manual processes stitched together through email approvals and informal workarounds. Over time, these environments accumulate operational friction. Data definitions drift across systems, workflows diverge between teams, and governance exists in policy documents rather than in the systems where work actually occurs.

For years, organizations have learned to operate within this complexity.

Artificial intelligence exposes it.

AI systems rely on consistent patterns in both data and execution. If customer records differ across systems, if product definitions vary between departments, or if critical operational decisions depend on manual spreadsheets, the technology struggles to produce reliable outcomes. Instead of creating clarity, it amplifies inconsistency.
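To make the point about differing customer records concrete, here is a minimal sketch of a cross-system consistency check. Everything in it is an assumption for illustration: the system names (`crm`, `erp`), the field names, the sample records, and the helper `find_conflicts` are hypothetical, and a real environment would pull records from the actual systems of record.

```python
from collections import defaultdict


def normalize(value):
    """Trim and lowercase strings so cosmetic differences are not flagged."""
    return value.strip().lower() if isinstance(value, str) else value


def find_conflicts(systems, fields, key="customer_id"):
    """Return {record_id: {field: {system: value}}} for fields that disagree.

    `systems` maps a (hypothetical) system name to a list of record dicts.
    """
    seen = defaultdict(lambda: defaultdict(dict))
    for system_name, records in systems.items():
        for record in records:
            for field in fields:
                if field in record:
                    seen[record[key]][field][system_name] = normalize(record[field])

    conflicts = {}
    for record_id, by_field in seen.items():
        # A field conflicts when the systems hold more than one distinct value.
        differing = {
            field: values
            for field, values in by_field.items()
            if len(set(values.values())) > 1
        }
        if differing:
            conflicts[record_id] = differing
    return conflicts


# Illustrative sample data only; not drawn from any real system.
systems = {
    "crm": [
        {"customer_id": "C1", "email": "Ana@Example.com", "country": "US"},
        {"customer_id": "C2", "email": "lee@example.com", "country": "CA"},
    ],
    "erp": [
        {"customer_id": "C1", "email": "ana@example.com ", "country": "US"},
        {"customer_id": "C2", "email": "lee@example.com", "country": "US"},
    ],
}

conflicts = find_conflicts(systems, fields=["email", "country"])
print(conflicts)
```

In this sample, customer C1 differs only in capitalization and whitespace, so it passes, while C2 is flagged because the two systems disagree on country. A report like this is one simple way to measure how much inconsistency a model would inherit.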

At its core, this is not a technology problem. It is an execution maturity problem.

Artificial intelligence reflects the maturity of the environment in which it operates. When workflows are clearly defined, data is governed and consistent, and decision logic is embedded directly into operational systems, AI can dramatically improve productivity and decision quality.

When those elements are weak or fragmented, the opposite occurs.

Organizations end up scaling inefficiency rather than eliminating it.

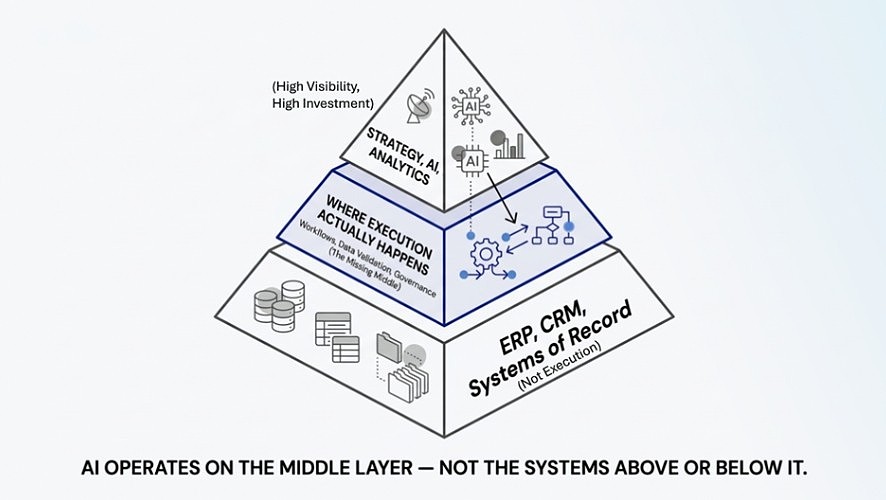

The Missing Middle

One way to understand this challenge is what can be described as the “missing middle” of enterprise execution.

Most organizations invest heavily at two levels. At the top, they define strategy, goals, and transformation initiatives, including artificial intelligence. At the bottom, they rely on systems of record such as ERP and CRM platforms to store and process transactions.

What is often missing is the operational layer between them: the environment where work is actually executed, decisions are made, data is validated, and governance is enforced in real time.

This “middle” layer is where workflows either succeed or break down. It is where data quality is determined, where business rules are applied, and where execution consistency is either achieved or lost.

When this layer is fragmented or unmanaged, organizations experience the exact symptoms many associate with failed AI initiatives: inconsistent data, manual workarounds, and unpredictable outcomes.

Artificial intelligence does not fix this gap. It exposes it.

What Leaders Should Do Next

For executive teams exploring artificial intelligence, the implication is clear: AI strategy should not begin with technology selection. It should begin with operational readiness.

That starts by addressing the missing middle, the layer where workflows, data, and governance intersect. Leaders must examine how work actually flows through the enterprise, how data is created and validated at the point of execution, and how decisions are enforced consistently across systems.

Increasingly, forward-thinking organizations are treating AI readiness as a diagnostic discipline, one that evaluates execution maturity before automation is introduced at scale.

This means strengthening workflows before automating them. It means ensuring data is validated at the moment it is created, not corrected downstream. And it means embedding governance directly into execution, rather than relying on policies that operate outside of it.
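As a minimal sketch of what validating data at the moment it is created can look like, the example below rejects a bad record at construction time instead of letting it flow downstream. The record shape, the `ALLOWED_COUNTRIES` set, the simplified email pattern, and the `RecordRejected` exception are all hypothetical choices made for illustration, not a prescribed design.

```python
import re
from dataclasses import dataclass

# Assumed business rules, for illustration only.
ALLOWED_COUNTRIES = {"US", "CA", "MX"}
EMAIL_PATTERN = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")  # deliberately simple


class RecordRejected(ValueError):
    """Raised when a record fails validation at the point of creation."""


@dataclass(frozen=True)
class CustomerRecord:
    customer_id: str
    email: str
    country: str

    def __post_init__(self):
        # Collect every problem so the person entering data sees them all at once.
        problems = []
        if not self.customer_id.strip():
            problems.append("customer_id is required")
        if not EMAIL_PATTERN.match(self.email):
            problems.append("email is not well formed")
        if self.country not in ALLOWED_COUNTRIES:
            problems.append("country is not an allowed code")
        if problems:
            raise RecordRejected("; ".join(problems))


# A valid record is created normally.
accepted = CustomerRecord("C1", "ana@example.com", "US")

# An invalid record never enters the system; the rules are enforced in execution.
try:
    CustomerRecord("", "not-an-email", "ZZ")
except RecordRejected as exc:
    rejection_message = str(exc)
    print("rejected:", rejection_message)
```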

These may not be the most visible investments in the age of artificial intelligence, but they are the ones that ultimately determine whether AI initiatives succeed or stall.

As organizations continue exploring the possibilities of AI, an important truth is becoming clear.

The companies that succeed with artificial intelligence will not necessarily be the ones that adopt the newest technologies first.

They will be the ones disciplined enough to fix how work actually gets done and how data is validated before asking machines to do it faster.

About the Author

Mark Kruckeberg is a Managing Partner at Soltec, a consulting firm focused on operational transformation, AI readiness, and data governance. Soltec works with organizations to improve workflow execution, strengthen data quality, and prepare enterprise environments for artificial intelligence using the Manch Centralized Orchestration Platform. Learn more at www.soltecinc.com.